This project was completed during Fall 2007 for a course in the Human Computer Interaction program at Iowa State University.

Introduction


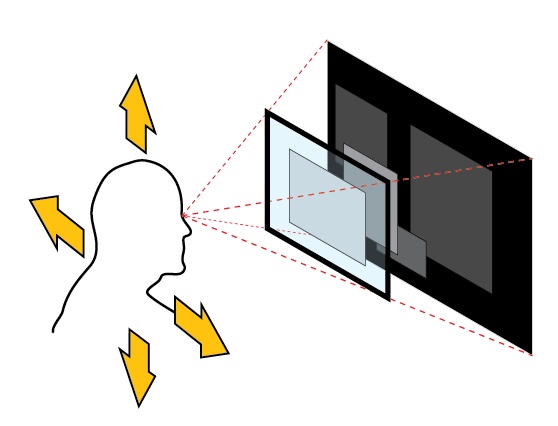

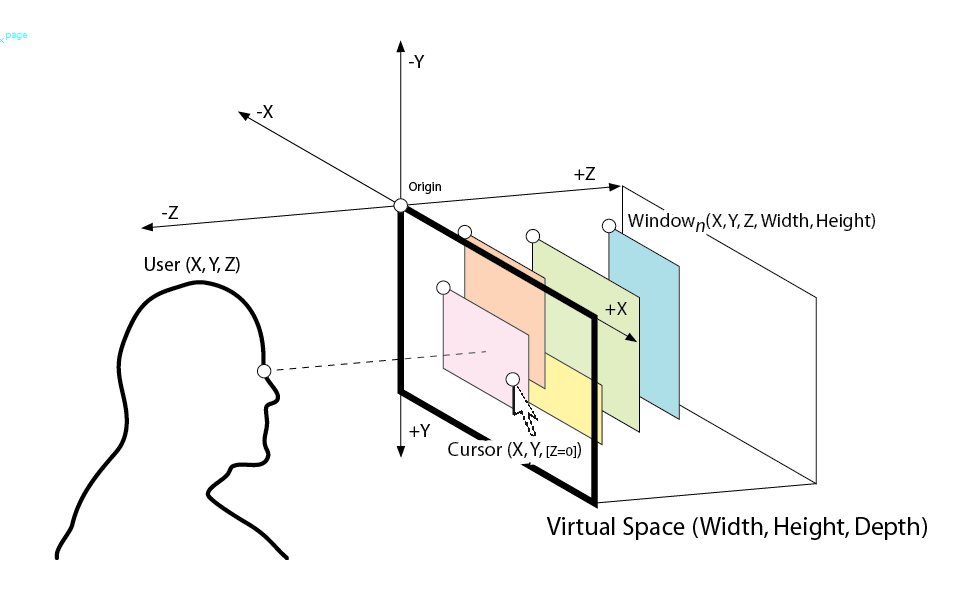

Imagine your monitor is a box one foot deep, and instead of all your applications being compressed to the front plane of the monitor, they are suspended at various depths inside this box. If you move your head to the side, you can peek around the application in front, and see a window that was hidden behind it. This is what I simulate with my project. The objective is to evoke a sense of depth without resorting to costly or clumsy stereoscopic display devices.

Perceiving Depth without Stereoscopy

There are many existing technologies that help simulate 3D space: VR goggles, anaglyph (red/cyan) glasses, LCD shutter glasses, lenticular lenses, and LCD displays with a striped barrier. All of these work on the principle of sending a different image to each eye. We perceive depth primarily through this difference. But we can perceive depth with one eye closed because there are several other cues to depth besides binocular vision. The lens action of the human eye automatically adjusts to focus on the subject, which means a solitary eye knows how far away the subject is, and in some sense we can perceive depth through awareness of this physiological response. We also perceive depth through the parallax motion that occurs between visually overlapping near and far objects when we move our head and our viewpoint changes. Near objects appear to move relative to far objects. This is the feature I want to exploit.

Consumer stereoscopic display technologies – in particular 3D shutter glasses, head-mounted displays, and parallax barrier LCD displays – are not well suited to prolonged usage or productivity applications where resolution is important. Users have to put up with large headgear, decreased resolution, flicker, and headaches when dealing with 3D display technology as it stands today. I want to bypass these inconveniences and provide a depth experience practical for everyday use.

Platform and Implementation Details

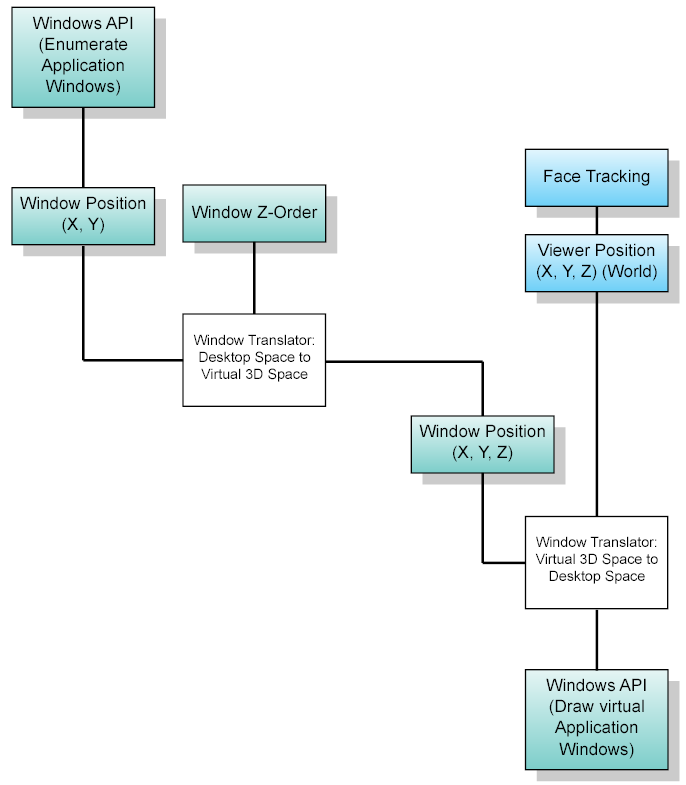

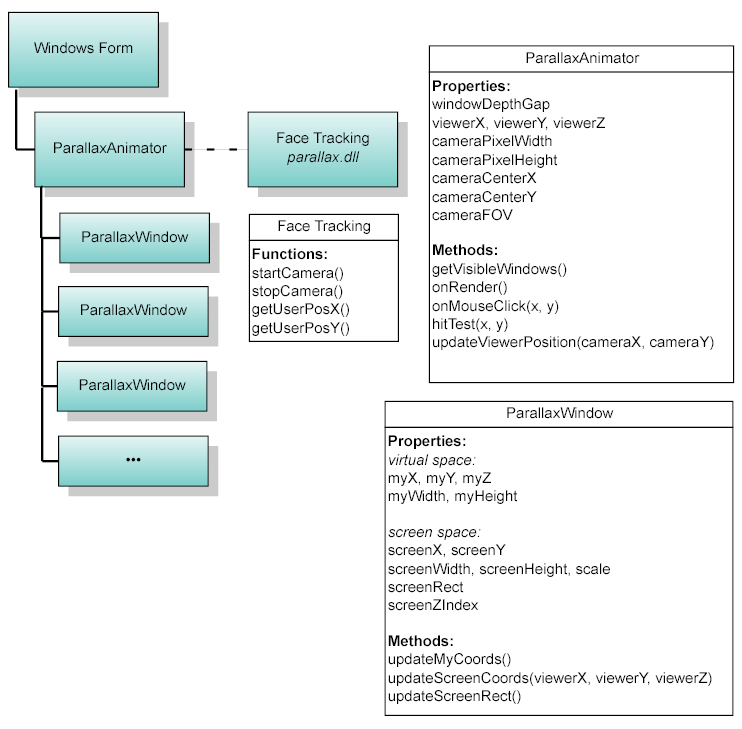

My application requires Windows Vista and a webcam. It makes use of the Desktop Window Manager (DWM) composition engine introduced in Vista. With a DWM API call, you can paint a live snapshot of the client area of any open application. This is a central requirement for simulating parallax motion, as window areas must be translated and scaled continuously. Users need a DirectX 9-capable video accelerator to use the DWM. The DWM is similar to Quartz in Mac OS X in that applications do not draw directly to the on-screen display buffer. Windows Flip 3D is the best example of how the DWM works. Since windows are 2D entities by design, showing them properly in 3D requires transforming the 2D rendering into 3D space. Using off-screen buffers means that the window is only rendered once it has been positioned in the 3D environment, which avoids redundant processing. This high-level OS management of desktop composition is essential to parallax motion simulation. The concept could eventually be extended to other platforms that use managed desktop composition.
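The continuous translation and scaling of window areas can be sketched with similar triangles. The snippet below is only an illustration, not the project's actual code: it assumes a single pinhole eye, positions measured in centimetres from the screen centre, and a hypothetical `Head` record and `project` function. It finds where the line of sight from the eye to a point behind the screen crosses the screen plane.

```python
from dataclasses import dataclass


@dataclass
class Head:
    x: float         # horizontal offset from the screen centre, in cm (assumed unit)
    y: float         # vertical offset from the screen centre, in cm
    distance: float  # distance from the eye to the screen plane, in cm


def project(px: float, py: float, depth: float, head: Head) -> tuple[float, float, float]:
    """Project a point `depth` cm behind the screen onto the screen plane.

    Returns (screen_x, screen_y, scale). A depth of 0 returns the point
    unchanged at scale 1, so a foreground window never moves.
    """
    if depth < 0:
        raise ValueError("windows may not float in front of the screen")
    if head.distance <= 0:
        raise ValueError("the head must be in front of the screen")
    # Similar triangles: the ray from the eye to the point crosses the
    # screen plane after covering this fraction of its length.
    t = head.distance / (head.distance + depth)
    screen_x = head.x + t * (px - head.x)
    screen_y = head.y + t * (py - head.y)
    return screen_x, screen_y, t
```

With the head 10 cm to the right of centre and 60 cm from the screen, a point at the screen centre but 30 cm deep is drawn about 3.3 cm right of centre at two-thirds scale, while a point at depth 0 stays exactly where it is.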

Data Flow

Object Model

Existing Products and Related Work

There are many research papers, software applications, and hardware devices (SmartNAV, Tracker Pro, Headmouse Extreme) devoted to controlling the user environment through head movements, such as moving a mouse cursor or clicking a button. My application, however, is not meant to drive the mouse cursor. In virtual reality game applications such as Microsoft Flight Simulator, head rotation is used to control the game environment, i.e., to let the player look around the cockpit using head movement. See http://www.naturalpoint.com/trackir/02-products/product-TrackIR-3-Pro.html. I believe that, except when paired with a head-mounted display, this behavior does nothing to facilitate immersion and is only an alternative control mechanism. Head rotation response is appropriate only in HMDs and should not be employed in fixed display systems. Programs that use changes in head position (XYZ translation) to create an immersive response are much more closely related to my application. See http://www.gesturecentral.com/useyourhead/specs.html.

The issues involved in face tracking for a perceptual user interface are well explained in an Intel research paper by Gary Bradski titled Computer Vision Face Tracking For Use in a Perceptual User Interface [1]. The paper discusses the CAMSHIFT algorithm, introduced by Intel and included in OpenCV. CAMSHIFT is a fast algorithm that is well suited to face tracking for user interfaces. Its underlying principle is to track a region of color. Other, more precise face tracking methods, such as those employing Eigenfaces [2], are too slow.
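The idea of tracking a region of color can be illustrated with the mean-shift step that CAMSHIFT repeats on every frame. The sketch below is a simplified pure-Python version, not the OpenCV implementation: the function name `mean_shift` and the list-of-lists probability map are assumptions for illustration, and the window size is held fixed, whereas CAMSHIFT also adapts the window size from frame to frame.

```python
import math


def mean_shift(prob, window, max_iter=20):
    """Slide `window` = (x, y, w, h) onto the centroid of `prob` beneath it.

    `prob` is a 2D list (rows of floats) holding, for each pixel, the
    probability that it belongs to the tracked color (e.g. skin tone).
    """
    x, y, w, h = window
    rows, cols = len(prob), len(prob[0])
    for _ in range(max_iter):
        m00 = m10 = m01 = 0.0  # zeroth and first image moments
        for r in range(max(y, 0), min(y + h, rows)):
            for c in range(max(x, 0), min(x + w, cols)):
                p = prob[r][c]
                m00 += p
                m10 += c * p
                m01 += r * p
        if m00 == 0:
            break  # nothing to track under the window
        cx, cy = m10 / m00, m01 / m00  # centroid of the probability mass
        # Re-centre the window on the centroid, rounding half up.
        new_x = math.floor(cx - (w - 1) / 2 + 0.5)
        new_y = math.floor(cy - (h - 1) / 2 + 0.5)
        if (new_x, new_y) == (x, y):
            break  # converged
        x, y = new_x, new_y
    return x, y, w, h
```

Starting from a window that only touches the edge of a colored blob, a few iterations pull the window over the blob's centre. The centre of the returned window would then serve as the head position estimate.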

Usability Issues

Windows Environment

Many behaviors of the Windows operating system do not translate easily to a 3D space in which windows must behave like sheets of paper floating in space. The float windows application therefore raises several immediate practical concerns, which I will try to address here.

The application will not significantly change the behavior of the Windows environment. It should be suitable for continuous use in a productive setting. The idea of applications moving in response to the user’s head position might seem unusable at first. However, my design keeps the active application completely stationary at its native pixel resolution. No window’s virtual position will come closer than the screen plane: no window will appear to float in front of the monitor, and all applications will appear to float behind the screen.

Task Switching

Task switching is accomplished in the usual way: clicking an inactive application brings it to the foreground.

The activated window is pulled out of its current level in the stack and moves smoothly to a Z-position of 0, maintaining its XY coordinates. Other applications recede to occupy the empty slot.
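The stack behavior described above might be modeled as follows. This is a minimal sketch under assumptions of my own: windows sit in evenly spaced depth slots, the `WindowStack` class and `step_toward` helper are hypothetical names, and the smooth motion is approximated by exponential easing applied once per frame.

```python
from dataclasses import dataclass, field


@dataclass
class WindowStack:
    """Z-ordered list of window names; index 0 is the foreground (Z = 0)."""
    spacing: float = 5.0  # assumed gap between depth slots
    order: list[str] = field(default_factory=list)

    def activate(self, name: str) -> None:
        """Pull `name` to the front; windows that were ahead of it
        each recede by one slot, filling the gap it leaves."""
        self.order.remove(name)
        self.order.insert(0, name)

    def target_depths(self) -> dict[str, float]:
        """Depth each window should settle at, given the current order."""
        return {name: i * self.spacing for i, name in enumerate(self.order)}


def step_toward(current: float, target: float, rate: float = 0.25) -> float:
    """One animation frame: move a fixed fraction of the remaining distance."""
    return current + (target - current) * rate
```

Only Z changes here; each window keeps its XY coordinates, and calling `step_toward` every frame moves the displayed depth gradually toward the value from `target_depths`.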

Drag & Drop

Drag and drop will be a problem because the actual background applications will be masked by the float window interface. Windows rendered in the float window interface are proxy images of the underlying applications. If a user attempts to drag a file from the foreground window to a background window, the appropriate Windows messages will not reach the receiving application unless my application forwards drag-and-drop messages.

What happens with the Windows taskbar?

It remains in its normal position and appearance, and remains always on top, fixed at a virtual z-depth of 0 (at screen distance) so that it will never shift in response to user position. In other words, it doesn’t change at all.

What happens when applications are minimized or maximized?

Minimized applications disappear as usual. Nothing about maximized applications makes them incompatible with the 3D system: the window size is set to match the dimensions of the screen, and the position is locked. In my application, a maximized application would behave as usual while it is in the foreground. When it loses focus, it would recede in space and appear to be reduced in size, but the user would not be able to see around it, since it would continue to fill the XY plane of the virtual space. Think of the maximized application moving back in the Z direction like the face of a piston receding down a cylinder. It fills the space. It would return to its maximized state when it receives focus again.

What happens when a user wants to read clear text from two applications at once?

The user will be able to pin applications to a z-depth of 0 (so that they don’t recede when they lose focus) by shift-clicking or some other method, similar to the “Always on top” window behavior.

How are the boundaries of the virtual area defined and enforced?

The virtual space will extend straight back from the screen, perpendicular to its surface. The XY position of the mouse cursor in the virtual space will be confined to the dimensions of the display resolution, e.g., 1024x768. The maximum z-depth will be a user option.

Prototype

I made a Flash prototype to demonstrate the essential behavior of the application. It is available online at http://www.mattmontag.com/hci575/project/. The prototype uses static images to show how the applications would shift on screen in response to head movement. The windows respond as if the user’s head moved directly in front of the mouse cursor. Move the mouse from side to side and try to keep your nose directly in front of the pointer. You will see that far-away applications are moving from side to side as if they were floating a few inches behind your LCD screen. Try to stare at the “foreground application” (the sharp image that doesn’t move) instead of the mouse pointer as you move your head. This is just for demonstration purposes – in lieu of a webcam actually tracking your head position. Do not let the role of the mouse cursor in this demonstration confuse you: mouse movement will not cause application windows to shift in the real application.

Demonstration

References

[1] G. R. Bradski, “Computer vision face tracking for use in a perceptual user interface,” Intel Technology Journal, Q2 1998.

[2] M. Turk and A. Pentland, “Eigenfaces for recognition,” Journal of Cognitive Neuroscience, vol. 3, no. 1, pp. 71–86, 1991.

[3] M. Hunke and A. Waibel, “Face locating for tracking and for human-computer interaction,” 28th Asilomar Conference on Signals, Systems & Computers, Pacific Grove, Calif., 1994.

[4] Kay Talmi and Jin Liu, “Eye and gaze tracking for visually controlled interactive stereoscopic displays,” Signal Processing Image Communication, vol. 14, no. 10, pp. 799–810, 1999.

[5] A. Azarbayejani, T. Starner, B. Horowitz, and A. Pentland, “Visually controlled graphics,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 15, no. 6, pp. 602–605, 1993.

[6] Siegmund Pastoor, Jin Liu, and Sylvain Renault, “An experimental multimedia system allowing 3-D visualization and eye-controlled interaction without user-worn devices,” IEEE Transactions on Multimedia, vol. 1, no. 1, pp. 41–52, 1999.

Luke / June 3, 2012

Wow this is so cool; it makes the windows on the outer monitor look old fashioned. Is there something like this on mac os x?

Chris / March 5, 2014

Wow, this project is amazing. I would follow the process of coding something like this for myself, but I have neither the time or expertise. I think it would be a good decision to either make this an open-source program or sell it. I would pay for something like this.

Nibroc99 / June 10, 2014

I'd love to see the same thing, but instead of tracking your face, use the position of the mouse on the screen. I'd love to have this on Windows 8.1!

Mel / May 25, 2016

Hello, this is an awesome project! Is the code available anywhere?

Thanks!